The World Wide Web has evolved into a medium of documents that, on a semantic level, are readable or interpretable by human beings rather than by computers. These documents contain information only in semi-structured form, expressed in languages such as HTML or XML. Meaningful contextualisation and processing still require the data to be refined intellectually in order to create information.

The idea of the Semantic Web is to add meta-information to every single document to specify the meaning of the document itself as well as its relation to the documents it links to. In this process, meaning is generated through contextualisation. Ontologies or ontology languages serve as a grammar for logical connections. The resulting meaningful infrastructure can be harvested and indexed by automated web services. The Semantic Web is organised in so-called triples, each consisting of two entities and the relation between them (subject, predicate, object). This structure recalls the triadic models of sign processes used in modern semiotics, and the similarity is not merely superficial. Semiotics deals with the question of how meaning is generated, so it has obvious potential to offer a basis for understanding concepts such as information, meaning, cognition and communication, and for applying them to the challenge of designing a web of meaningfully linked data.
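To make the triple structure concrete, here is a minimal sketch in plain Python. It assumes a toy in-memory graph rather than real Semantic Web tooling (actual systems use RDF serializations, IRIs and query languages such as SPARQL); the `ex:` identifiers and the `match` helper are hypothetical, chosen only to show how each statement links a subject to an object through a predicate and how links can be followed from one entity to the next.

```python
from typing import Iterable, List, NamedTuple, Optional


class Triple(NamedTuple):
    subject: str
    predicate: str
    obj: str  # named "obj" to avoid shadowing the built-in "object"


# Hypothetical example graph: "ex:" identifiers are illustrative placeholders,
# and plain strings stand in for IRIs and literals.
GRAPH = {
    Triple("ex:Cybersemiotics", "ex:author", "ex:SorenBrier"),
    Triple("ex:Cybersemiotics", "ex:published", "2008"),
    Triple("ex:Cybersemiotics", "ex:buildsOn", "ex:PeirceanSemiotics"),
    Triple("ex:PeirceanSemiotics", "ex:author", "ex:CharlesSandersPeirce"),
}


def match(graph: Iterable[Triple],
          subject: Optional[str] = None,
          predicate: Optional[str] = None,
          obj: Optional[str] = None) -> List[Triple]:
    """Return the triples matching a pattern, sorted; None acts as a wildcard."""
    return sorted(
        t for t in graph
        if (subject is None or t.subject == subject)
        and (predicate is None or t.predicate == predicate)
        and (obj is None or t.obj == obj)
    )


# Follow a link: what does Cybersemiotics build on, and who authored that?
for _, _, target in match(GRAPH, "ex:Cybersemiotics", "ex:buildsOn"):
    print(target, match(GRAPH, target, "ex:author")[0].obj)
```

The pattern-matching style (fixed positions plus wildcards) mirrors, in a very reduced form, how triple stores are queried; the meaning of `ex:author` is not in the data itself but in an ontology that a machine must be told about.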

The American philosopher Charles Sanders Peirce (1839-1914) designed several models of the sign process or, as he called it, semiosis, which were based on his three universal categories: Firstness, Secondness and Thirdness. Everything that is univocal and independent of anything else is Firstness. This is the category of feeling, pure quality or possibility. An example of Firstness is the quality of a colour: it may be perceived differently, but its existence is unique and without reference to anything else. Secondness is the category of two entities, or more generally the category of relation or reaction without any law or reason. Human consciousness, for example, deals with reactions between the inner and the outer world. Thirdness is the mediating element between two entities. It arises when there is a relation between two things that is connected with thinking and/or the formation of a habit. This is the category of representation, habit, communication/mediation, law and sign processes, which does not mean that signs are irrelevant to the first two categories. As in Leibniz's philosophy, for Peirce every thought is connected with signs. Peirce's concept of the sign is very broadly conceived: for him every thought is a sign, and every sign shows a triadic structure. Owing to its universal character, Peircean philosophy lends itself to broad application in diverse fields. This elaborate sign theory was further developed into modern semiotics, but its potential has still not been fully realised.

The Danish biologist, cybernetician, semiotician and philosopher Søren Brier developed such an approach in his magnum opus Cybersemiotics (2008) in order, among other things, to bring the Peircean semiotic approach up to date and to integrate it with the developments in systems science, cybernetics, pragmatic linguistics and document management that have taken place since Peirce's death. In this work (a new edition appeared in 2010) he elaborates a framework for integrating (bio)semiotic, (second-order) cybernetic and systems theories into a universal foundation for the information, cognition and communication sciences. Brier is Professor in Semiotics of Information, Cognition and Communication Sciences at the Centre for Language, Cognition and Mentality at Copenhagen Business School and worked for more than ten years at the Royal School of Library and Information Science in Denmark. In Cybersemiotics he uses the Peircean classification of signs to reach an understanding of indexing, one of the essential tasks in Library and Information Science (LIS). In indexing, the indexer assigns a descriptor to a document. This is an act of representation, because the descriptor stands for the document in the information retrieval process.
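The representational role of the descriptor can be illustrated with a small sketch. Assuming hypothetical document IDs and descriptor terms, the Python below records an indexer's assignments and inverts them, so that at retrieval time the descriptor, not the document itself, is what the query meets. A simple Boolean AND stands in for retrieval here; real systems add ranking, thesaurus relations and much more.

```python
from collections import defaultdict
from typing import Dict, Set

# Hypothetical indexer decisions: each document is represented by descriptors
# drawn from a controlled vocabulary (IDs and terms are illustrative only).
ASSIGNMENTS: Dict[str, Set[str]] = {
    "doc1": {"Semiotics", "Information theory"},
    "doc2": {"Cybernetics", "Information theory"},
    "doc3": {"Semiotics", "Biosemiotics"},
}


def build_inverted_index(assignments: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Map each descriptor to the set of documents it stands for."""
    index: Dict[str, Set[str]] = defaultdict(set)
    for doc_id, descriptors in assignments.items():
        for descriptor in descriptors:
            index[descriptor].add(doc_id)
    return dict(index)


def retrieve(index: Dict[str, Set[str]], *descriptors: str) -> Set[str]:
    """Return documents indexed with all given descriptors (Boolean AND)."""
    sets = [index.get(d, set()) for d in descriptors]
    return set.intersection(*sets) if sets else set()


index = build_inverted_index(ASSIGNMENTS)
print(sorted(retrieve(index, "Semiotics", "Information theory")))
```

A document that was never assigned a given descriptor cannot be found through it, however relevant its content: the quality of retrieval depends on the indexer's act of representation.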

To outline the potential of semiotic theories for Information Science we asked Søren Brier to explain the crucial points of his transdisciplinary approach.

Professor Brier, how did you become interested in LIS? Why did you choose semiotics as your field of work?

The simple answer is that it was difficult to find a job when I finished my studies in biology and psychology with an interdisciplinary philosophy-of-science focus, partly because I was already too interdisciplinary to fit into the university system of the early 1980s and partly because we had a government that cut university funding. I was then offered a job at the Royal School of Library and Information Science in the area of librarianship in science and technology and philosophy of science. Here I could further develop my interest in the two-cultures problem, as the computer systems in library document systems were clearly instruments of a natural-scientific and technical type of rationality, yet were supposed to serve human interests in knowledge, learning and search. As I came to know the area better, it became clear that no transdisciplinary theory had been developed for LIS and that the use of semiotic theory was very rare. I began developing this connection through my study of C.S. Peirce's triadic, transdisciplinary, pragmaticist theory of semiotics.

Is it necessary to update or extend Peirce's theories in order to apply them to Information Science today?

Yes, this is what I attempt to lay the foundation for in my habilitation book Cybersemiotics: Why Information Is Not Enough. A theory of signification, of how meaning is produced through signs, is needed to connect human consciousness with a theory of nature and information. For this we need to enlarge the picture by superimposing and integrating an even broader foundation, such as Charles Sanders Peirce's pragmaticist semiotics in its modern development as biosemiotics, not least because the understanding of the body's role in meaning production has become increasingly crucial in cognitive science and linguistics as well. The first groundwork explaining why and how a combined framework of semiotics and cybernetics can make it possible to construct an evolutionary, transdisciplinary theory of information, cognition, consciousness, meaning and communication can be found in some of my early articles for the second and fourth CoLIS [Fn 01] conferences.

In your book Cybersemiotics you propose a universal semiotic Information Science, which integrates multiple theories of cognition and communication. How do you see the relationship of this universal Information Science to the current state of Information Science?

My theory of Cybersemiotics goes beyond the current state of Information Science by merging a cybernetic informational approach without semantics with a Peircean (bio)semiotic approach built on a special kind of phenomenology called phaneroscopy, which is grounded in the Firstness of feeling, pure mathematical form and qualia, the basis of meaning creation. The theory is a unique transdisciplinary paradigm with a special ontological theory, developed in the paradigmatic book Cybersemiotics: Why Information Is Not Enough, and it will be developed further in a forthcoming book with the working title Tohu Bohu: The non-dualist approach behind Cybersemiotics, on which I am currently working.

The reason for this endeavour is that the current state of Information Science is very complex: there are many kinds of information concepts, and many of the Information Sciences have not made their implicit ontologies and epistemologies clear. They therefore often have incompatible paradigms that generate incommensurable types of knowledge communication, creating confusion. Unfortunately, many people do not seem to be aware of this confusion and talk as if we had one unified Information Science.

What are the differences between the diverse concepts of information? In his "trilemma", the philosopher Rafael Capurro denied that a unified theory of information is feasible, because he rejected synonymy, equivocation and analogy as possible relations between the different concepts of information. What do you think?

Rafael, whom I know well, sees some of the same problems I see. Many researchers in LIS work with a concept of information that includes a semantic aspect and often has a social-psychological foundation. The problem is that this does not fit with the information theories of Claude Shannon and Norbert Wiener, which are founded on objective mathematical conceptions without any natural semantic aspect. Others base their information concept on a general systems theory, like Wolfgang Hofkirchner's Unified Theory of Information (UTI), which builds on the principles of self-organization and emergence. These approaches are not really compatible with Shannon and Wiener, or even with Gregory Bateson's further development of Wiener's concept of information, because Wiener's and Bateson's paradigms are based not on an organicist view but on a merger of the mathematical and thermodynamic concepts of entropy. Even Shannon's and Wiener's theories are not compatible, as Shannon sees information as entropy, whereas Wiener and Erwin Schrödinger, who carried the concept into biology, defined information as negentropy. Many researchers overlook even this difference in their attempts to create a unified information theory. But unified theories are not possible if basic differences between paradigms are overlooked. Good philosophy-of-science analysis is necessary.
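The sign difference between the two conventions can be made concrete in a few lines. The sketch below computes Shannon's entropy H = -Σ p·log2 p for a discrete probability distribution and, next to it, a Wiener-style "information as negative entropy". This is a deliberate simplification under stated assumptions: Wiener worked largely with continuous distributions, and reducing his measure to -H for a discrete case is an illustrative choice here, not his exact definition. The function names are hypothetical.

```python
import math
from typing import Sequence


def shannon_entropy(probs: Sequence[float]) -> float:
    """Shannon's H = -sum(p * log2(p)) in bits; zero-probability terms contribute 0."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def wiener_information(probs: Sequence[float]) -> float:
    """Illustrative negentropy convention: information as the negative of entropy."""
    return -shannon_entropy(probs)


fair_coin = [0.5, 0.5]
print(shannon_entropy(fair_coin), wiener_information(fair_coin))
```

For a fair coin the first convention yields 1 bit of information and the second yields -1: the same formal quantity enters the two theories with opposite signs, which illustrates why the two information concepts cannot simply be merged.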

Finally, there are purely computational-informational approaches to transdisciplinary information theory. Some are based on the Turing paradigm and the information processing paradigm, which assume that human brains and organizations process information in the way software programs do in computers. A lot of cognitive science was based on such a paradigm. But it lacks the first-person phenomenological approach to human experiential consciousness as the basis of meaning production, both in the subject and intersubjectively. Meaning has been a problem in cognitive science, psychology and linguistics for the same reason. Computation in this form is far removed from the human understanding of meaning in natural language and social interchange.

Other researchers and practitioners work with pan-computational and informational paradigms, developing concepts of natural computation that go beyond Turing's definition of algorithmic computing and therefore also work with an enlarged concept of what a computer is. On this basis they view the human brain and social organizations, as well as the whole natural world, as computers. This pan-informational and computational view is promoted, for instance, by Gordana Dodig-Crnkovic (ICON). See the recent book she edited with Mark Burgin, Information and Computation (World Scientific, 2011).

The problem with this new paradigm, in my view, is that science describes the world without human consciousness. It can deal with the brain, but not with first-person experience. Therefore it cannot explain human consciousness and its production of meaning in society.

Then there is, as mentioned earlier, the Unified Theory of Information (UTI) by Wolfgang Hofkirchner, based on Ludwig von Bertalanffy's general systems theory combined with a theory of self-organization extending from nature into social systems theory. You can find readings on it in the online journal TripleC [Fn 02]. Hofkirchner has recently tried to integrate semiotics into his theory.

Furthermore, there is a paradigm like Niklas Luhmann's systems theory, which is based on an integration of general systems theory, George Mackey's and Bateson's theory of information as a difference that makes a difference, a generalized version of Humberto Maturana and Francisco Varela's theory of autopoiesis, and an attempt to add aspects of Edmund Husserl's phenomenology. In my view this theory, which is based on operational constructivism, is the most advanced. But its ontology is not clear and its integration of phenomenology does not work well. [Fn 03]

I do not believe that all these paradigms can presently be combined into a unified Information Science, and I think they lack a decent scientific definition of the first-person experiential aspect of reality and therefore of cultural intersubjective meaning. Such a basis can be found, in a unique way, in Charles Sanders Peirce's pragmatic and triadic theory of semiotics, which rests on a transdisciplinary and evolutionary ontology. But it is somewhat outdated with respect to biological science, cybernetics and self-organization.

Cybersemiotics is an attempt to place Luhmann's theory inside the Peircean epistemological and ontological framework and to remedy the weaknesses of both paradigms, as well as the general problems of previous attempts to build a unified theory of information.

Then there are also the problems of purely constructivist conceptions of knowledge and science, which on the other hand lose the connection to any kind of objective truth function and therefore to the core of the natural and technical sciences. I think that Peirce's pragmaticist philosophy of science and meaning can solve that problem too. You can find an article of mine on that problem in the online journal Constructivist Foundations. [Fn 04]

Could you briefly explain the Cybersemiotic Project?

Cybersemiotics is an attempt to provide a transdisciplinary framework for scientific and scholarly work on information, cognition and communication coming from the natural, technical and social sciences as well as the humanities. It builds on two existing interdisciplinary approaches: on the one hand, cybernetics and systems theory, including information theory and science; on the other, Peircean semiotics, including phenomenology and pragmatic aspects of linguistics. Cybersemiotics attempts to make these two interdisciplinary paradigms, both of which go beyond mechanistic and purely constructivist ideas, complement each other in a common framework.

What makes Cybersemiotics different from other attempts to produce a transdisciplinary theory of information, cognition and communication is its absolute naturalism, which forces us to view life and consciousness, as well as cultural meaning, as part of nature and evolution. It thus aims to combine a number of different platforms from which attempts have been made to build universal theories of perception, cognition, consciousness and communication, relativizing each of them as only a partial view:

- The physico-chemical scientific paradigm based on third person objective empirical knowledge and mathematical theory, but with no conceptions of experiential life, meaning and first person embodied consciousness and therefore meaningful linguistic intersubjectivity.

- The biological and natural-historical science approach, understood as the combination of genetic evolutionary theory with an ecological and thermodynamic view, which takes the evolution of experiential living systems as the ground fact and is engaged in a search for empirical truth, yet does so without a theory of meaning and first-person embodied consciousness and thereby of meaningful linguistic intersubjectivity.

- The linguistic-cultural-social structuralist constructivism that sees all knowledge as constructions of meaning produced by the intersubjective web of language, cultural mentality and power, but with no concept of empirical truth, life, evolution, ecology and a very weak concept of subjective embodied first person consciousness even while taking conscious intersubjective communication and knowledge processes as the basic fact to study (the linguistic turn).

- Any approach which does not take the qualitative distinction between subject and object as the ground fact on which all meaningful knowledge is based, which is why it considers all results of the sciences, including linguistics and embodiment of consciousness, as secondary knowledge, as opposed to a phenomenological (Husserl) or actually phaneroscopic (Peirce) first person point of view considering conscious meaningful experiences in advance of the subject/object distinction.

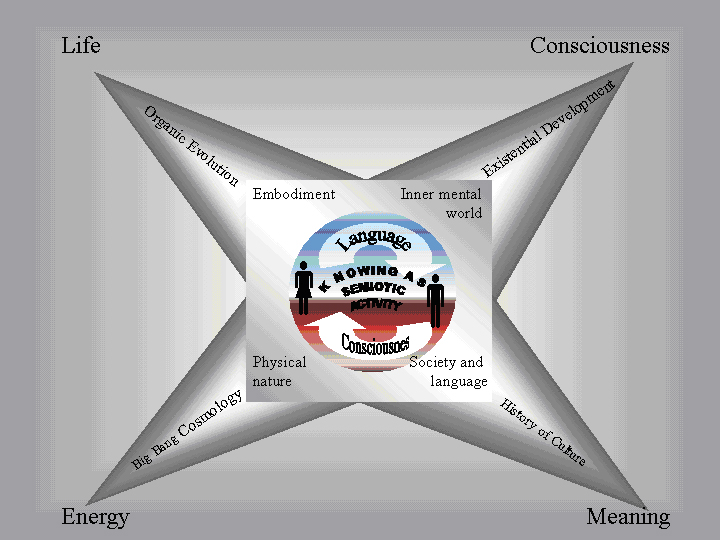

Here is an overview in this central model:

Figure 1: The Semiotic Star: A model of how the communicative social system of the embodied mind produces four main areas of knowledge. Physical nature is usually explained as originating in energy and matter, living systems as emerging from the development of life processes (for instance, the first cell). Social culture is explained as founded on the development of meaning in language and practical habits, and finally our inner mental world is explained as deriving from the development of our individual life world and consciousness. But all these types of knowledge have their origin in our primary semiotic life-world and the common sense we develop there through our cultural history (horizon). In the course of this development the results of the natural and social sciences as well as the humanities feed into our common-sense horizon and expand it.

The aforementioned information processing paradigm lacks an account of human experiential consciousness and thus of the semantic dimension. In the current development of the WWW into the Semantic Web (briefly described in the introduction) we have the inverse problem: on a semantic level, digital content is readable or interpretable by human beings rather than by computers, and therefore the aim of contextualisation is hard to implement. How would you assess this development?

A common view among information theorists is that information, integrated with entropy in some way, is a basic structure of the world. Computation is the process of the dynamic change of information. For anything to exist for an individual, the individual must obtain information about it through perception or by reorganizing existing information into new patterns. This cybernetic-computational-informational view rests on a universal and un-embodied conception of information and computation, which is the deep foundation of "the information processing paradigm". This paradigm is central to most versions of cognitive science and to its latest developments in brain function and linguistic research. Taken to its full metaphysical scope, this paradigm views the universe as a computer and humans as dynamic systems that produce, and are guided by, computational functioning. Language is seen as a sort of culturally developed algorithmic program for social information processing.

What seems to be lacking is knowledge of the nature and role of embodied first-person experience, qualia, meaning and signification in the evolution and development of cognition and linguistic communication among self-conscious social beings, shaped by the grammatical structure and dynamics of language and mentality. From a general epistemological and philosophy-of-science standpoint, I argue that any transdisciplinary theoretical framework for information, cognition, signification and meaningful communication must engage, within its theory, the role of first-person, conscious, embodied social awareness in producing signification from percepts and meaning from communication. It has to embrace what Peirce calls cenoscopic science or, to use a modern phrase, the intentional sciences. If it does not, but instead bases itself on physicalism, including physicalist forms of informationalism such as info-computationalist naturalism, it will be difficult to make any real progress in understanding the relation between humans, nature, computation and cultural meaning through an integrated information, cognition and communication science.

Thus documents on the net are now searchable primarily in natural language, based on national and situated meaning. My Danish English is different from your German English, and we further differentiate between American, British, South African and Canadian English, to mention a few. Soon most documents will be in Mandarin. Automatic translators are getting better, but there is great room for improvement, and the demands on translation for producing reliable search terms are huge; even if we keep to English, word usage will be ambiguous and subject- and situation-dependent. These are three aspects computers do not handle well. Documents need systematic indexing based on a knowledge ontology to be searchable in a systematic fashion. That would supplement the natural-language angle on searching for meaning and improve effectiveness considerably. The only problem is that it cannot be done at an acceptable level by machines, and the cost is too high for most purposes when it is done by humans. Furthermore, systematically constructed ontologies are frozen structures of meaning, which over time will diverge increasingly from the meanings of natural languages, which evolve all the time through social dynamics and history.
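One conventional way of supplementing natural-language search with systematic indexing is an entry vocabulary that maps variant terms to preferred descriptors. The sketch below assumes a tiny, hypothetical mapping table (the terms and the `normalize_query` helper are illustrative, not from any real thesaurus) and shows regional variants such as "colour"/"color" or "lift"/"elevator" collapsing onto one descriptor, while unknown terms are passed back unresolved.

```python
from typing import Dict, Iterable, List, Set, Tuple

# Hypothetical entry vocabulary: natural-language variants (spelling, regional
# usage, synonyms) mapped to one preferred descriptor of a controlled vocabulary.
ENTRY_TERMS: Dict[str, str] = {
    "colour": "Color",
    "color": "Color",
    "lift": "Elevators",
    "elevator": "Elevators",
    "sign process": "Semiosis",
    "semiosis": "Semiosis",
}


def normalize_query(terms: Iterable[str]) -> Tuple[Set[str], List[str]]:
    """Map user terms to preferred descriptors; unknown terms are returned separately."""
    descriptors: Set[str] = set()
    unmatched: List[str] = []
    for term in terms:
        key = term.strip().lower()
        if key in ENTRY_TERMS:
            descriptors.add(ENTRY_TERMS[key])
        else:
            unmatched.append(term)
    return descriptors, unmatched


print(normalize_query(["Colour", "color", "lift", "bank"]))
```

The lookup table is exactly the kind of "frozen structure of meaning" described above: it resolves variants someone has anticipated, but an ambiguous, subject- or situation-dependent word such as "bank" still needs a human, and the table itself must be maintained by hand as usage changes.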

The generation of meaning through contextualisation characterises the Semantic Web. How can, in your opinion, the Peircean concept of semiosis be adapted to this process?

A major problem from a Peircean point of view is that there is no life as such in the system itself on the net. Spontaneous intertextuality on the net is very limited, as texts and concepts have no way of interacting by themselves. Browsers relate texts to each other through their algorithms, and you can obtain some kind of pragmatic meaning of concepts that way. But that is neither an embodied nor a culturally and pragmatically determined meaning, as in normal human linguistic interaction. It belongs to another, un-embodied virtual universe.

I recently had a discussion on the question to which of Peirce's universal categories one could assign the structure of the Semantic Web. What do you think?

I think that inside computers and their networks we do not have actual triadic semiotic structures as they are defined in Peircean triadic pragmatic semiotic theory. We are at the informational dualistic level, which could be called pre-semiotic or quasi-semiotic. [Fn 05] It is the embodied phenomenological understanding that makes all the difference. Peirce's evolutionary metaphysics has a phenomenological point of departure, but he frames the task differently from Husserl as well as from Hegel. It is therefore most relevant to hold on to the name Peirce invented for his own stance: phaneroscopy. To me, there is a basic problem in modern European phenomenology from Husserl onward, namely that when we talk about phenomenology, we cannot get to the world of others and to the world of objects, as they hardly have any existence outside our own consciousness. This is because it deals with a certain view of pre-linguistic consciousness before any distinction between object and subject. Peirce, however, tries to solve this problem by introducing his three basic categories of Firstness, Secondness and Thirdness and connecting them to the sign process, thus creating a common foundation for cognition and communication that makes his theory intersubjective at its basis. First-person experience then does not come from a transcendental subject, but from 'pure feeling', or Firstness. Firstness must thus be the un-analysable, inexplicable, unintellectual basis which runs in a continuous stream through our lives and is therefore the sum total of consciousness. Possibility is a good word for Firstness. Existence is an abstract possibility (Firstness), which is no-thing. Peirce equates being and possibility with Firstness, as is clear from these two trichotomies: (1) being, (2) actuality, (3) reality; and (1) possibility, (2) actuality, (3) necessity.
Here it is important to understand that the categories are inclusive: you cannot have Secondness without Firstness or Thirdness without Secondness.

Peirce refers to Hegel's dynamic dialectical thinking as a contrast to Aristotle. Whereas Aristotle's logic is concerned with separate, discrete phenomena in a deductive pattern, Hegel in his phenomenology dissolves this classical static view into a dynamic movement. This movement is driven by oppositions between structural elements that, through their struggle with each other, develop towards a new whole, which is usually the whole we have now. This whole is viewed as preserving the former elements, but now united in a new, higher synthesis. This dialectic is a much more organic way of thinking than the more mechanical classical logic. Hegel's term for this overcoming of contradiction at a new level, which at the same time preserves the contradiction at a lower one, is Aufhebung. The concept is sometimes translated as "sublation".

There is a lot of Thirdness in Hegel's phenomenology, as well as an intuitive apprehension of the total picture, or Firstness. What is missing from a Peircean point of view is the healthy sense of reality that Secondness provides in the form of the brute facts on which everyday consciousness and self-conscious experience rest. This is reality in the form of dynamical objects that do not flawlessly conform to our expectations, and this drives the development as well as the evolution of knowing in Peircean semiotic analysis. We have to reflect on what the brute facts say about Thirdness, and this is the road to science and to meaning in natural languages. Thus, in Peirce's view, Hegel does not see the difference between Firstness, Secondness and Thirdness as foundational; he does not see that none of them can be turned into one of the others, nor does he realize that they cannot melt together into one whole. In Peirce's theory, Firstness, Secondness and Thirdness all have to be present all the time in a dynamical interaction in order to produce meaningful signs, and this requires several autopoietic, biologically embodied beings interacting with an ecological and/or social environment. This is what makes the process so complicated and dynamic that it is difficult for computers to mimic.

Professor Brier, thank you very much for the interview.

Footnotes

[01] International Conference on Conceptions of Library and Information Science.

[02] TripleC - Cognition, Communication, Co-operation. Open Access Journal for a Global Sustainable Information Society: http://www.triple-c.at/index.php/tripleC.

[03] See, for instance, Brier, S. (2007): "Applying Luhmann's system theory as part of a transdisciplinary frame for communication science", Cybernetics & Human Knowing, Vol. 14, no. 2-3, pp. 29-65, for an argument for this view.

[04] http://www.univie.ac.at/constructivism/journal/articles/5/1/019.brier.pdf.

[05] See Professor Winfried Nöth's and Professor Mihai Nadin's articles in Cybernetics & Human Knowing.

Linda Treude, M.A., studied library science and art history at Humboldt-Universität zu Berlin and is currently a staff member of the project "Dimekon" (Digitales Medienkompetenz-Netzwerk, a digital media competence network) at the HU's Computer- und Medienservice (Computer and Media Service).